I spent a weekend writing a small Rust tool that composites AI company logos onto a Redbubble full-print t-shirt canvas. Not because anyone asked. Halfway through tuning the layout I noticed something faintly absurd: every logo I was tiling looked like a tyre tread. OpenAI’s swirl is a directional rain pattern. Gemini’s four-pointed star is a Bridgestone Blizzak winter sipe. Anthropic’s ringed A is a sidewall stamp. Nvidia’s eye is a hub cap. Lay them next to each other on a 7000-pixel canvas and the whole thing reads less like an industry portrait and more like a Beaurepaires catalogue page.

Logos From Loops

The visual grammar of AI companies has converged on a small family of forms: radial symmetry, interlocking arcs, gradients from cool to warm, the occasional offset chevron. They are tread patterns. They are tread patterns because tread patterns are what you draw when you want to suggest motion, grip, and continuous contact with a surface you are moving over – which is exactly the brand promise of a model that turns prompts into outputs without you needing to know how the road underneath works.

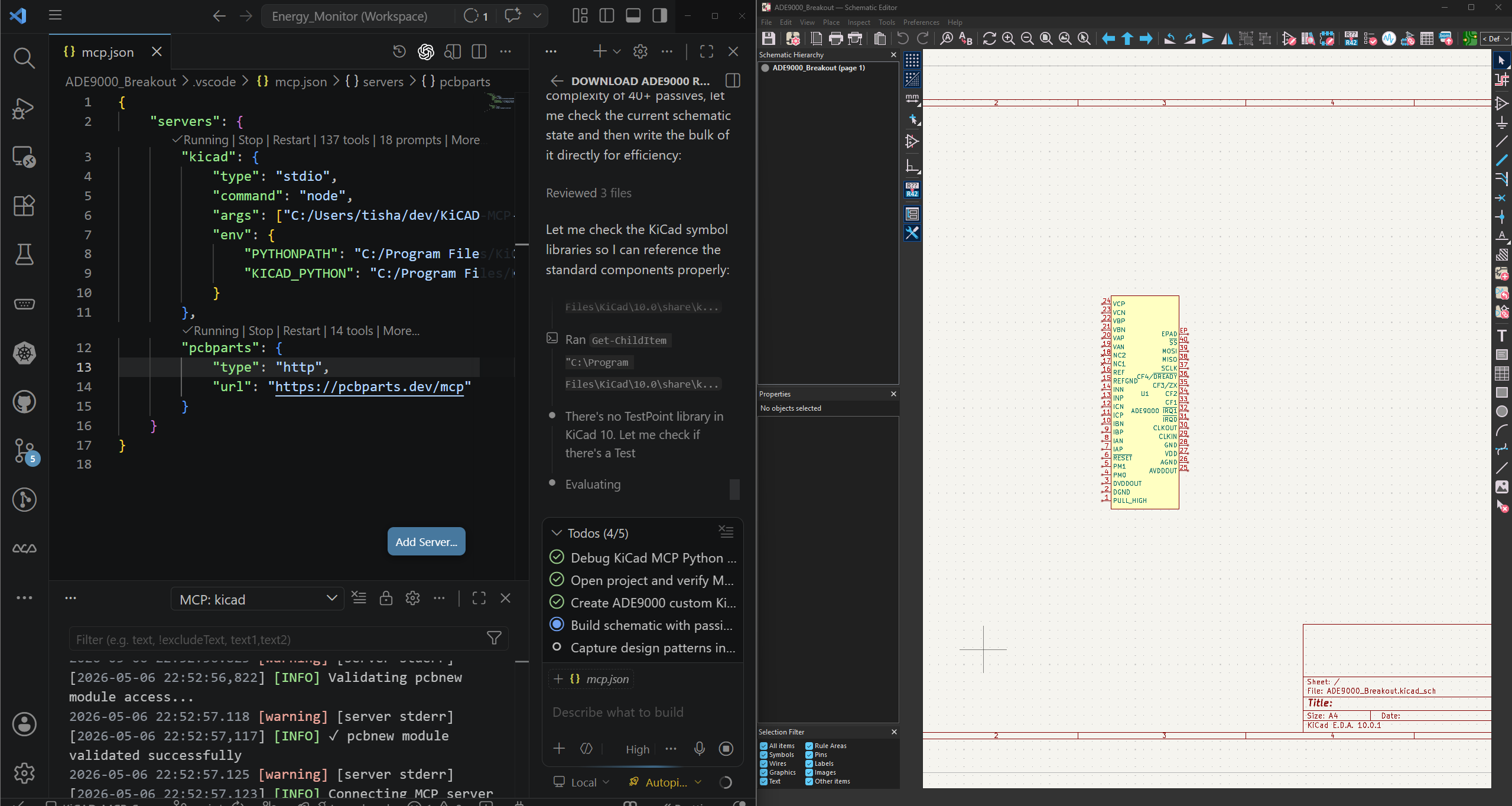

The tool generates 21 of these tread-pattern logos – the leaders, challengers, niche players, and visionaries from the April 2026 Gartner Magic Quadrant for Enterprise AI Coding Agents, plus the dozen companies tracked by isaiprofitable.com – and tiles them across the canvas under four layouts: a jittered grid, a hex pack, an arabesque mask built from Lissajous curves under a wallpaper symmetry group, and a Voronoi packer that wraps cleanly at the edges. The arabesque mode is the one that looks most like a real design, which is fitting because the brand identities themselves are arabesques of the same underlying motif.
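For a sense of how little is involved, here is a minimal sketch of two of those building blocks: sampling a Lissajous curve and generating hex-pack centres. It is not the crate's actual code. The function names `lissajous_points` and `hex_centres`, their parameters, and the choice of a pointy-row hex layout are all assumptions made for illustration, and it uses only the standard library.

```rust
use std::f64::consts::PI;

/// Hypothetical helper: sample `n` points of the Lissajous curve
///   x = sin(a*t + delta), y = sin(b*t), for t in [0, 2*pi),
/// scaled to fit a square tile of side `size` centred at (cx, cy).
pub fn lissajous_points(
    a: u32,
    b: u32,
    delta: f64,
    n: usize,
    cx: f64,
    cy: f64,
    size: f64,
) -> Vec<(f64, f64)> {
    let r = size / 2.0;
    (0..n)
        .map(|i| {
            let t = 2.0 * PI * (i as f64) / (n as f64);
            let x = cx + r * (a as f64 * t + delta).sin();
            let y = cy + r * (b as f64 * t).sin();
            (x, y)
        })
        .collect()
}

/// Hypothetical helper: centres for a hex pack on a `width` x `height` canvas.
/// Rows sit `pitch * sqrt(3) / 2` apart and odd rows shift right by half a
/// pitch, so every centre is exactly `pitch` away from its nearest neighbours.
pub fn hex_centres(width: f64, height: f64, pitch: f64) -> Vec<(f64, f64)> {
    let row_step = pitch * 3f64.sqrt() / 2.0;
    let mut centres = Vec::new();
    let mut row = 0usize;
    let mut y = pitch / 2.0;
    while y < height {
        let offset = if row % 2 == 1 { pitch / 2.0 } else { 0.0 };
        let mut x = pitch / 2.0 + offset;
        while x < width {
            centres.push((x, y));
            x += pitch;
        }
        y += row_step;
        row += 1;
    }
    centres
}
```

Calling `hex_centres(7000.0, 7000.0, 700.0)` would give one anchor per logo slot on a 7000-pixel canvas, and `lissajous_points(3, 2, PI / 2.0, 360, cx, cy, 700.0)` would trace a curve inside one slot. The symmetry-group masking and the edge-wrapping Voronoi packer are left out of this sketch.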

Nothing in the code is novel. What is striking is how little code it takes to produce something that passes for a branding agency deliverable. Spend a few minutes tweaking curve density and symmetry group and you can cover most of the Fortune 500’s recent AI rebrand portfolio without engaging a designer. None of it requires aesthetic judgement. All of it requires a loop.

That is worth sitting with before moving on to the money.

The Gartner Thermometer and the Sequoia Deficit

The 2024 and 2025 Gartner Hype Cycles placed generative AI at or near the Peak of Inflated Expectations, the top of the curve before the long slide into the Trough of Disillusionment. That topological framing is rough but useful. More arresting is the arithmetic underneath it.

Sequoia Capital published a piece in mid-2024 estimating that AI infrastructure – primarily Nvidia GPU clusters – required roughly $600 billion in annual revenue to justify the capital being committed, while observable AI-attributable revenue across the industry was a fraction of that figure. The gap has a name in finance: “productive deployment lag.” In plain language: the shovels are being sold, the mines are being dug, and the gold has not appeared at the rate the tunnel length implies it should. On isaiprofitable.com, the only company with a green checkmark next to its name is Nvidia, the one selling the shovels.

The 21-tread-pattern t-shirt is a small monument to this gap. Nvidia at the hub, eleven others on the sidewall burning through capital at rates that make the GPU revenue arithmetic interesting, one wallpaper symmetry group holding them all together on a cotton substrate.

The more interesting question is not whether AI is profitable yet. It is why AI is already affecting how we think, even before the P&L sheets catch up.

The Fur Analogy and Cognitive Shedding

Around 1.5 million years ago, Homo erectus started losing body hair. The proximate cause was almost certainly thermoregulation: an upright, running hunter on the African savannah generates core heat faster than a fur coat can dissipate it under midday sun. So the fur went. But heat still needed managing. The solution was clothing, an external thermal regulation artifact that did the job the body had previously handled internally.

This is a pattern that runs through the entire history of human technology. We replace innate biological functions with external artifacts. We then build manufacturing chains around those artifacts. We build economic systems around those chains. The original biological capacity atrophies from disuse or is simply never developed in the first place, because the artifact is already there when the individual arrives.

We do not have hooves. We have shoes. We do not have gills. We have submarines. We do not have the magnetoreceptors that birds use on migration routes. We have GPS. In each case the external artifact is not merely a substitute; it is a platform. It invites elaboration. The shoe becomes the boot, the boot becomes the orthotic, the orthotic becomes a running shoe industry, and the running shoe industry eventually funds the biomechanics research that feeds back into the next generation of the artifact.

I spent six months in late 2022 and early 2023 as an Enterprise Architect contractor at Bridgestone Australia in Adelaide, wiring up the interfaces between SAP, Salesforce, and the mobile mechanic booking platforms that schedule a van to a customer’s driveway to swap a set of tyres. The work was unglamorous integration plumbing – canonical data models, idempotent message contracts, the usual distributed-systems hygiene – but it gave me a tourist’s window into how a tyre and car parts business actually operates. Every node in the system was eventually a physical thing: a passenger radial sitting on a pallet in a Wingfield warehouse, a wheel alignment slot at a Beaurepaires bay, a truck retread coming back from its second life on a B-double. The software was a thin coordination layer over a very heavy industrial substrate.

The history of the company that owns that substrate is the part that stuck with me. Bridgestone – ブリヂストン – started in 1931 not as a tyre company but as a spinoff of a maker of 足袋 (tabi) split-toe footwear based in 久留米 (Kurume), 九州 (Kyushu). The founder, 石橋正二郎 (Shojiro Ishibashi), had been adding rubber soles to cotton tabi since 1923 under the Asahi brand, and rubber soles turned out to be the gateway material to rubber tyres. The name Bridgestone is a literal English inversion of his surname: 石 (ishi, stone) and 橋 (hashi, bridge), reversed and translated, picked partly because it sounded export-ready and partly to echo Firestone, the American tyre company he admired. (Bridgestone would eventually acquire Firestone in 1988 for $2.6 billion, which is the kind of historical loop that only happens in long-lived industrial companies.) The katakana spelling ブリヂストン preserves an archaic ヂ where modern Japanese would write ジ, a piece of pre-war orthography the brand keeps the way Mitsubishi keeps its three diamonds.

What I took away from those six months is that Bridgestone is not really a tyre conglomerate that used to make shoes. It is a rubber compounding and supply-chain organisation that noticed rubber was useful for several different external human exoskeletons – first feet, then cars, then conveyor belts, hoses, seismic isolators under buildings, and now sustainably-sourced guayule and dandelion-derived natural rubber to hedge against Hevea brasiliensis geopolitics. The material is the through-line. The product is whichever exoskeleton the century happens to need.

I jokingly refer to Bridgestone as “Big Rubber”.

The Car Was Always an Exoskeleton

The automotive vocabulary has colonised AI discourse so completely it has become invisible. We “steer” language models. We apply “guardrails.” We talk about “autopilot” and “co-pilot” and “lanes” and “on-ramps.” There is a vehicle for sale right now whose entire value proposition is that the steering wheel becomes optional once the AI is mature enough. Almost nobody stops to ask why this particular metaphor landed so naturally.

Here is one answer: cars and AI are both exoskeletons.

A car is not a vehicle in the narrow sense. It is a kinetic exoskeleton that extends the range, speed, load-bearing capacity, and weather tolerance of a soft-bodied primate who would otherwise top out at 5 km/h on a good day. The car does not move instead of the human; it extends the human’s movement by several orders of magnitude at the cost of an elaborate support infrastructure: roads, fuel networks, insurance markets, traffic law, emissions regulation, and, yes, tyre companies.

AI is doing the same thing to cognition. The language model does not think instead of the human; it extends the human’s language-generation and pattern-retrieval capacity by several orders of magnitude at the cost of an equally elaborate support infrastructure: GPU clusters, training pipelines, safety teams, regulatory frameworks, and eventually a Gartner hype cycle or two. The car vocabulary arrived so naturally because the thing being described was already familiar. We built the kinetic exoskeleton first. The cognitive one came later, but the shape of the dependency relationship – human plus artifact plus infrastructure – is identical.

What Gets Shed

The cognitive functions most visibly affected are the ones that involve generation under uncertainty: drafting a first sentence, naming a variable, composing an email, sketching a logo. These are precisely the tasks that feel slow and effortful without assistance, and precisely the tasks that AI handles smoothly. The friction is real. The assistance is real. The atrophy risk is also real.

Musicians who practise scales develop neural pathways that do not form if the scales are skipped. Writers who struggle through first drafts develop a tolerance for ambiguity that does not develop if a model always resolves it for them. The logos the tool generates look entirely plausible. They look like every other AI logo in the current design vocabulary. They were generated without any cognitive friction whatsoever, which is both the point and the problem. The friction was where the design decision lived.

This does not mean AI assistance is bad, any more than wearing shoes is bad. It means the physiotherapy question matters: are you also doing the cognitive equivalent of the exercises that keep the underlying capacity from going the way of the body hair, or of the quads that once let you spend two days chasing a speared zebra until it ran out of steam and died?

The Shoe Company Principle

Ishibashi’s insight in 1931 was not that feet needed covering; the Asahi tabi side of the business already had that locked up. His insight was that rubber was a platform material for exoskeletal extension, and that the same compounding science, vulcanisation know-how, and distribution muscle that made a good rubber-soled tabi could make a good passenger tyre. He followed the material from one form of human locomotion extension to the next, and nearly a century later that bet is still paying out across tyres, conveyor belts, and seismic isolators.

The AI companies following the GPU stack from language generation to reasoning to planning to embodied robotics are doing exactly this. The H100 is the rubber: a platform material whose applications are not exhausted by the first product built on it. The chat interfaces, the co-pilots, the logo generators – these are the 足袋. What comes next on that manufacturing chain – the 自動車 (jidousha, automobile) tyre equivalent for cognition – is the part worth watching, and worth surviving the trough of disillusionment for.

Whether the current crop of AI businesses is profitable by the time the next Gartner report drops is the wrong question. Whether the infrastructure being built can outlast the hype cycle long enough to produce the genuine platform shift is the question Ishibashi would have asked, probably while measuring rubber tensile strength in a factory that used to make footwear. Japanese has a phrase for that patient industrial cultivation: 改善 (kaizen, continuous improvement). It is not the same word as ハイプ (haipu, hype), and the two have rarely been observed in the same building.

The last shoe company that asked the wrong question about its own product is still making pneumatic rubber cylinders for the machines that replaced the horses we no longer needed to breed. Every AI logo on the t-shirt is a tread pattern drawn by people who do not yet know which century of exoskeleton they are designing for. That beats a PowerPoint ecosystem built around the profitability question, and it is the bet I would rather be inside of when the trough arrives.

The code is at ai_logos_shirt – a small Rust crate, MIT licensed, build instructions in the README. Ping me if you have strong opinions about cognitive atrophy, the Sequoia revenue gap, or split-toe rubber footwear.